APMDC BUILDS A FUTURE-READY ENTERPRISE WITH DATAEVOLVE

PRIMARY DRIVERS

The customer, Andhra Pradesh State Mining Development Corporation (APMDC), wanted to build and deploy an enterprise platform on the AWS public cloud. Creating environments manually left the door open to many human errors, most of them minor but some potentially serious. As the number of systems and the volume of data grew, managing the storage and compute services became increasingly difficult.

BUSINESS CHALLENGES

As the amount of data grew, there were no guidelines in place for efficiently provisioning and managing storage and compute resources. Without consistently enforced guidelines and automated processes, APMDC struggled to manage its data at scale, which led to issues with security, compliance, and application performance.

- Creating infrastructure services such as S3 buckets, EBS volumes, and EC2 instances required significant manual effort.

- The AWS resources were not created according to security best practices, which exposed the environment to security risks.

- No naming or tagging standard was followed, which made it difficult to group resources and attribute costs to them.

- There was no proper backup mechanism for the resources created in AWS, which created a risk of data loss.

- Implementing and tracking cost-reduction measures, monitoring the various services, and providing operational support were the need of the hour.

SOLUTION PROPOSED

- An automated process for creating EC2 instances, EBS volumes, and S3 buckets for end users, with all required AWS best practices applied.

- Provision S3 buckets from predefined templates with encryption, versioning, correct tagging, a restricted bucket policy, access logging, and lifecycle rules.

- Provision EBS volumes from predefined templates with encryption and tagging, with the option to select the right volume type based on the application's IOPS and throughput requirements.

- Provide a cost-effective backup solution for the data in EBS volumes through root volume snapshots and EMC NetWorker backups.

- Frequent optimization and service improvements in the Dev and Prod environments, driven by monitoring S3 and EBS usage, with automated clean-ups of unattached volumes and older snapshots.

- Monitor Smart Systems Tool metrics and AWS Trusted Advisor findings to optimize EBS and S3.

- Lifecycle rules to transition objects from S3 Standard to S3 Intelligent-Tiering to reduce costs.

- A sound costing strategy for all provisioned resources, based on effective tag management.

- Full integration with the customer's existing IT operational support service desk framework, proactive operational capabilities, and entry-level support for all cloud-related requests.

- Follow best practices and compliance principles for operational requests, follow the Incident, Change, and Configuration Management processes in operational activities, and meet operational SLAs under a 24x7x365 support plan.
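The templated S3 provisioning listed above can be sketched as a sequence of boto3 S3 client calls. This is a minimal sketch under stated assumptions, not APMDC's actual template: it receives an already-created S3 client, and the required tag keys, the choice of SSE-KMS as default encryption, and the per-bucket prefix in the log bucket are hypothetical.

```python
# Minimal sketch of a "predefined template" for a new S3 bucket.
# Assumptions (hypothetical, not from the case study): the required tag keys,
# SSE-KMS with the AWS managed key as default encryption, and the
# "<bucket>/" prefix layout inside the central log bucket.
REQUIRED_TAG_KEYS = ("CostCentre", "Application", "Environment")


def provision_bucket(s3, bucket, region, tags, log_bucket):
    """Create `bucket` with the baseline settings; `s3` is a boto3 S3 client."""
    missing = [key for key in REQUIRED_TAG_KEYS if key not in tags]
    if missing:
        # Enforce the tagging standard before anything is created.
        raise ValueError(f"missing required tags: {missing}")

    create_args = {"Bucket": bucket}
    if region != "us-east-1":
        # us-east-1 is the default location and is not passed as a constraint.
        create_args["CreateBucketConfiguration"] = {"LocationConstraint": region}
    s3.create_bucket(**create_args)

    # No bucket may be public.
    s3.put_public_access_block(
        Bucket=bucket,
        PublicAccessBlockConfiguration={
            "BlockPublicAcls": True,
            "IgnorePublicAcls": True,
            "BlockPublicPolicy": True,
            "RestrictPublicBuckets": True,
        },
    )
    # Default encryption for every new object.
    s3.put_bucket_encryption(
        Bucket=bucket,
        ServerSideEncryptionConfiguration={
            "Rules": [{"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "aws:kms"}}]
        },
    )
    s3.put_bucket_versioning(Bucket=bucket, VersioningConfiguration={"Status": "Enabled"})
    s3.put_bucket_tagging(
        Bucket=bucket,
        Tagging={"TagSet": [{"Key": k, "Value": v} for k, v in sorted(tags.items())]},
    )
    # Server access logs go to the region's central log bucket.
    s3.put_bucket_logging(
        Bucket=bucket,
        BucketLoggingStatus={
            "LoggingEnabled": {"TargetBucket": log_bucket, "TargetPrefix": f"{bucket}/"}
        },
    )
```

In practice it would be called with `boto3.client("s3", region_name=region)`. Validating the tags before the first API call means a non-compliant request fails without leaving a half-configured bucket behind; the bucket policy and lifecycle rules would then be applied with `put_bucket_policy` and `put_bucket_lifecycle_configuration`.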

BUSINESS BENEFITS

- 99.9% availability of the enterprise-wide Cloud Compute & Data Analytics platform.

- Infrastructure creation time reduced by 60%. Systems are faster and cheaper to deploy because, with built-in templates and bootstrapping scripts, security configurations no longer have to be rebuilt or approved by the security team for each deployment.

- Operational costs reduced by 40%. Centrally managing configuration and discouraging ad hoc work reduced the manual effort spent on security and infrastructure creation.

- With effective backup solutions in place, the risk of data loss decreased by 90%.

- Improved transparency: clear visibility into how every system is configured for security at any point in time, thanks to built-in security management.

- Cost tracking: correct tagging of all resources provides transparency on spending versus savings.

- Self-healing based on use cases configured in Ignio.

- Highly efficient operational support under a 24x7x365 support plan.

Primary Storage

- S3 Standard:

- Used for EMC NetWorker backup data, logs, and application scripts that must be readily available.

- All S3 buckets are encrypted

- All S3 buckets have versioning enabled by default.

- All S3 buckets have tagging in place.

- All S3 buckets have restricted bucket policy added.

- None of the S3 buckets is public

- All S3 buckets have access logging enabled, and the log bucket has a strictly restrictive bucket policy.

- Lifecycle rules are applied to specific S3 buckets whose data needs to be filtered. Objects are transitioned to S3 Intelligent-Tiering or expired, depending on the requirement.

- Cross-region replication is in place for S3 buckets that need a copy of their data in a different region for disaster recovery.

- S3 bucket policies deny access to everyone by default and allow-list specific VPCs, roles, users, and IP addresses as required.

- A VPC endpoint is in place so that the buckets can be accessed from specific VPCs.

- AWS Config rules are in place to alert the Ops team to any suspicious activity in S3.

- Access logs are enabled and queried with Amazon Athena for further analysis.
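The "deny by default, allow-list as required" bucket policy described above can be sketched as a policy generator. This is a minimal sketch, not APMDC's actual policy; the VPC endpoint IDs, principal ARNs, and CIDR ranges are inputs, and any concrete values passed to it are hypothetical.

```python
# Minimal sketch of a "deny unless allow-listed" S3 bucket policy.
# Endpoint IDs, principal ARNs and CIDR ranges are caller-supplied inputs.
def restricted_bucket_policy(bucket, vpce_ids=(), principal_arns=(), cidrs=()):
    """Return a policy that denies all S3 actions on `bucket` unless the request
    comes through an allowed VPC endpoint, from an allowed principal, or from an
    allowed IP range."""
    conditions = {}
    if vpce_ids:
        conditions["StringNotEquals"] = {"aws:SourceVpce": list(vpce_ids)}
    if principal_arns:
        conditions["ArnNotLike"] = {"aws:PrincipalArn": list(principal_arns)}
    if cidrs:
        conditions["NotIpAddress"] = {"aws:SourceIp": list(cidrs)}
    if not conditions:
        # A Deny with no conditions would lock everyone out, including admins.
        raise ValueError("at least one allow-list entry is required")

    bucket_arn = f"arn:aws:s3:::{bucket}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyUnlessAllowListed",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
                # Condition operators in one statement are ANDed, so the Deny
                # applies only when the request matches none of the allow-lists.
                "Condition": conditions,
            }
        ],
    }
```

The result would be applied with `s3.put_bucket_policy(Bucket=bucket, Policy=json.dumps(policy))`. A Deny statement grants nothing by itself: allow-listed principals still need IAM permissions, and the role used to administer the bucket must be on the list, or the policy can no longer be changed by that role.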

- S3 Intelligent-Tiering:

Based on lifecycle policies, objects in S3 Standard are moved to S3 Intelligent-Tiering after a specified number of days, or are expired. This is a cost-effective option for objects whose access patterns are unknown, and as long as the optional archive tiers are not enabled, retrieving objects from this storage class incurs no delay.
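A lifecycle rule of the kind described here can be sketched as the configuration dictionary that boto3's `put_bucket_lifecycle_configuration` accepts. The rule ID, prefix, and day counts are hypothetical, since the case study only says "after specific days". Because the buckets are versioned, an `Expiration` action on its own only adds a delete marker, so the sketch also allows expiring noncurrent versions.

```python
# Minimal sketch of one lifecycle rule in the shape expected by
# put_bucket_lifecycle_configuration. Prefixes and day counts are hypothetical.
def lifecycle_configuration(rule_id, prefix, tiering_after_days=None,
                            expire_after_days=None, noncurrent_expire_days=None):
    rule = {"ID": rule_id, "Filter": {"Prefix": prefix}, "Status": "Enabled"}
    if tiering_after_days is not None:
        # Move current objects from S3 Standard to S3 Intelligent-Tiering.
        rule["Transitions"] = [
            {"Days": tiering_after_days, "StorageClass": "INTELLIGENT_TIERING"}
        ]
    if expire_after_days is not None:
        # On a versioned bucket this adds a delete marker instead of removing data.
        rule["Expiration"] = {"Days": expire_after_days}
    if noncurrent_expire_days is not None:
        # Permanently remove old versions once they have been noncurrent this long.
        rule["NoncurrentVersionExpiration"] = {"NoncurrentDays": noncurrent_expire_days}
    if not rule.keys() & {"Transitions", "Expiration", "NoncurrentVersionExpiration"}:
        raise ValueError("a lifecycle rule needs at least one action")
    return {"Rules": [rule]}
```

For example, `s3.put_bucket_lifecycle_configuration(Bucket=name, LifecycleConfiguration=lifecycle_configuration("logs-to-it", "logs/", tiering_after_days=30))` would transition objects under `logs/` after 30 days.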

- Amazon EBS

- EBS provides the storage for all EC2 instances hosting the applications and databases currently deployed in the IaaS model.

- EBS volume types are chosen from General Purpose SSD, Provisioned IOPS SSD, Throughput Optimized HDD (st1), and Cold HDD (sc1), based on the application requirements.

- All EBS volumes are encrypted with AWS KMS, so all snapshots taken from them are encrypted as well.

- All EBS volumes are tagged on creation with cost-centre and application details.

- AWS Trusted Advisor findings on low volume utilisation drive optimization activities, in which the team reaches out to the customer with the supporting data.

- The Smart Systems Tool sends alert emails to the Ops team when EBS utilisation is high; the Ops team then takes the required action after discussing it with the team concerned.

- Volume IOPS are adjusted after studying the metrics from the Smart Systems Tool.

- Clean-up activities are in place for EBS volumes that are not attached to any instance. An AWS Lambda function written in Python with boto3 regularly sends the support team a list of unattached volumes; the team analyses these volumes and deletes them once approved.

- A clean-up Lambda function removes root volume snapshots after 40 days.
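The two clean-up Lambda functions described above can be sketched with standard EC2 calls. This is a minimal sketch, not the team's actual code: it assumes root volume snapshots carry a hypothetical `SnapshotType=root` tag, and it returns its report instead of e-mailing the support team. Unattached volumes are only listed, because the source says deletion waits for approval; expired snapshots are deleted.

```python
# Minimal sketch of the clean-up Lambda. Assumptions (hypothetical): root
# volume snapshots are tagged SnapshotType=root, and the report is returned
# rather than sent to the support team.
from datetime import datetime, timedelta, timezone

SNAPSHOT_RETENTION = timedelta(days=40)  # retention period from the case study


def _all_items(call, key, **kwargs):
    """Follow NextToken pagination of an EC2 describe_* call."""
    while True:
        response = call(**kwargs)
        yield from response[key]
        token = response.get("NextToken")
        if not token:
            return
        kwargs["NextToken"] = token


def list_unattached_volumes(ec2):
    """IDs of volumes in the 'available' state, i.e. not attached to any instance."""
    filters = [{"Name": "status", "Values": ["available"]}]
    return [v["VolumeId"] for v in _all_items(ec2.describe_volumes, "Volumes", Filters=filters)]


def expired_root_snapshots(ec2, now=None):
    """IDs of this account's root volume snapshots older than the retention period."""
    now = now or datetime.now(timezone.utc)
    filters = [{"Name": "tag:SnapshotType", "Values": ["root"]}]
    snapshots = _all_items(ec2.describe_snapshots, "Snapshots", OwnerIds=["self"], Filters=filters)
    return [s["SnapshotId"] for s in snapshots if now - s["StartTime"] > SNAPSHOT_RETENTION]


def delete_snapshots(ec2, snapshot_ids):
    for snapshot_id in snapshot_ids:
        ec2.delete_snapshot(SnapshotId=snapshot_id)


def lambda_handler(event, context):
    import boto3  # bundled with the AWS Lambda Python runtime

    ec2 = boto3.client("ec2")
    expired = expired_root_snapshots(ec2)
    delete_snapshots(ec2, expired)
    # Volumes are only reported; deletion waits for the support team's approval.
    return {"unattached_volumes": list_unattached_volumes(ec2), "deleted_snapshots": expired}
```

boto3 returns `StartTime` as a timezone-aware datetime, which is why `now` defaults to an aware UTC timestamp. Snapshots still referenced by an AMI cannot be deleted, so a production version would need to handle that error.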

- Performance and Metrics monitoring

- AWS Trusted Advisor is used, and its EBS and S3 findings are acted upon regularly.

- Basic CloudWatch metrics such as disk_used_percent (published by the CloudWatch agent) and VolumeQueueLength are used for monitoring.

- The Smart Systems Tool provides detailed metrics and alerts on volume utilisation, and is used to decide when to scale volume size or IOPS.
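An alarm on the `VolumeQueueLength` metric mentioned above can be sketched as the keyword arguments for CloudWatch's `put_metric_alarm`. This is an illustration only: the threshold, period, evaluation count, and SNS topic are hypothetical and would need tuning per volume type and workload.

```python
# Minimal sketch: keyword arguments for CloudWatch put_metric_alarm that
# watch one EBS volume's queue length. Threshold, period and SNS topic are
# hypothetical values.
def ebs_queue_length_alarm(volume_id, sns_topic_arn, threshold=10.0, periods=3):
    return {
        "AlarmName": f"ebs-volume-queue-length-{volume_id}",
        "Namespace": "AWS/EBS",
        "MetricName": "VolumeQueueLength",
        "Dimensions": [{"Name": "VolumeId", "Value": volume_id}],
        "Statistic": "Average",
        "Period": 300,  # seconds per datapoint
        "EvaluationPeriods": periods,  # consecutive breaching periods before alarming
        "Threshold": threshold,
        "ComparisonOperator": "GreaterThanThreshold",
        # An idle volume may publish no datapoints; do not treat that as a breach.
        "TreatMissingData": "notBreaching",
        "AlarmActions": [sns_topic_arn],  # e.g. an SNS topic that e-mails the Ops team
    }
```

It would be used as `boto3.client("cloudwatch").put_metric_alarm(**ebs_queue_length_alarm("vol-0123456789abcdef0", topic_arn))`. Requiring several consecutive breaching periods keeps short I/O bursts from paging the Ops team.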

Logging

- CloudTrail

Logs are stored in a CloudWatch Logs log group in the same account and are also delivered to an S3 bucket in that account. All S3 buckets are versioned to prevent accidental deletion of logs. The log buckets have a strictly restricted bucket policy under which only the root user can modify the policy. Athena is used to query all CloudTrail logs in S3.

- Access Logs

All access logs are delivered to the log bucket of their region, which has strict permissions. Athena is used to query all access logs in S3.
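Querying the access logs with Athena, as described above, can be sketched with the boto3 Athena client. The database and table name, the result location, and the sample query are hypothetical; the column names assume a table defined with the S3 server access log layout given in the AWS documentation. The sample counts denied (HTTP 403) requests per requester, which is one way to spot suspicious access.

```python
# Minimal sketch of running an Athena query over S3 server access logs.
# The database/table name and result location are hypothetical.
import time

SAMPLE_QUERY = """
SELECT requester, operation, count(*) AS requests
FROM s3_access_logs_db.example_bucket_logs
WHERE httpstatus = '403'
GROUP BY requester, operation
ORDER BY requests DESC
LIMIT 20
"""


def run_athena_query(athena, sql, database, output_location, poll_seconds=2.0, sleep=time.sleep):
    """Start a query, wait for it to finish and return the first page of rows."""
    query_id = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )["QueryExecutionId"]

    while True:
        status = athena.get_query_execution(QueryExecutionId=query_id)["QueryExecution"]["Status"]
        if status["State"] in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        sleep(poll_seconds)
    if status["State"] != "SUCCEEDED":
        raise RuntimeError(f"query {query_id} ended in state {status['State']}")

    # The first row of a SELECT result holds the column headers.
    rows = athena.get_query_results(QueryExecutionId=query_id)["ResultSet"]["Rows"]
    return [[cell.get("VarCharValue") for cell in row["Data"]] for row in rows]
```

`get_query_results` returns results in pages, so this sketch only reads the first page; larger result sets would need to follow its `NextToken`.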

- Backup/Restore

- Entire EC2 instances, including their EBS volumes, are backed up using EMC NetWorker.

- Full backups run on Fridays, with incremental backups from Saturday to Thursday.

- In addition, AWS scheduled jobs take snapshots of all root volumes on a weekly basis.

- The retention period for these snapshots is 40 days.

- Root volume snapshots are used to recreate a machine, and the data is restored via NetWorker.

- The snapshots are stored in S3.
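The weekly NetWorker schedule above (full on Friday, incremental Saturday to Thursday) determines how many backup sets a restore needs. The sketch below makes that explicit, assuming that each incremental captures the changes since the previous day's backup; it models the schedule only and does not call NetWorker.

```python
# Minimal sketch of the weekly NetWorker schedule described above, assuming
# each incremental backs up the changes since the previous day's backup.
from datetime import date, timedelta
from typing import List

FULL_BACKUP_WEEKDAY = 4  # date.weekday(): Monday == 0, so Friday == 4


def backup_level(day: date) -> str:
    """'full' on Fridays, 'incremental' from Saturday to Thursday."""
    return "full" if day.weekday() == FULL_BACKUP_WEEKDAY else "incremental"


def restore_chain(day: date) -> List[date]:
    """Backup sets needed to restore the state of `day`: the most recent
    Friday full backup followed by every daily incremental up to `day`."""
    days_since_full = (day.weekday() - FULL_BACKUP_WEEKDAY) % 7
    last_full = day - timedelta(days=days_since_full)
    return [last_full + timedelta(days=i) for i in range(days_since_full + 1)]
```

A Friday restore needs a single set, while a Thursday restore needs seven (the Friday full plus six incrementals). That worst case is the price of running full backups only once a week, and the daily backups are what make a 24-hour RPO achievable.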

- Restoration

- If the root volume has an issue, or the instance passes only one of its two status checks (1/2), the root volume is removed, a new volume is created from the snapshot, and the instance is restored.

- If any files are lost, they are restored from the EMC NetWorker backups.

- Databases can be restored from the NetWorker backups.

- NetWorker also preserves metadata.

- NetWorker is highly cost-effective compared with other backup solutions.
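The root volume replacement step described above can be sketched with standard EC2 client calls and waiters. This is a minimal sketch under stated assumptions: the instance is EBS-backed, `gp3` is a hypothetical default volume type, and the faulty volume is kept rather than deleted. File and database restores from NetWorker happen separately.

```python
# Minimal sketch of restoring an instance's root volume from a snapshot.
# Assumptions: `ec2` is a boto3 EC2 client in the instance's region, the
# instance is EBS-backed, and gp3 is a hypothetical default volume type.
def replace_root_volume(ec2, instance_id, snapshot_id, volume_type="gp3"):
    reservation = ec2.describe_instances(InstanceIds=[instance_id])["Reservations"][0]
    instance = reservation["Instances"][0]
    root_device = instance["RootDeviceName"]
    zone = instance["Placement"]["AvailabilityZone"]
    old_volume = next(
        m["Ebs"]["VolumeId"]
        for m in instance["BlockDeviceMappings"]
        if m["DeviceName"] == root_device
    )

    # The root volume can only be swapped while the instance is stopped.
    ec2.stop_instances(InstanceIds=[instance_id])
    ec2.get_waiter("instance_stopped").wait(InstanceIds=[instance_id])

    # The new volume must be in the instance's Availability Zone; a volume
    # created from an encrypted snapshot is itself encrypted.
    new_volume = ec2.create_volume(
        SnapshotId=snapshot_id, AvailabilityZone=zone, VolumeType=volume_type
    )["VolumeId"]
    ec2.get_waiter("volume_available").wait(VolumeIds=[new_volume])

    ec2.detach_volume(VolumeId=old_volume, InstanceId=instance_id)
    ec2.get_waiter("volume_available").wait(VolumeIds=[old_volume])

    ec2.attach_volume(VolumeId=new_volume, InstanceId=instance_id, Device=root_device)
    ec2.get_waiter("volume_in_use").wait(VolumeIds=[new_volume])
    ec2.start_instances(InstanceIds=[instance_id])

    # The faulty volume is kept (not deleted) so it can be investigated.
    return old_volume, new_volume
```

Returning both volume IDs lets the operator tag or delete the old volume once the restored instance has passed both status checks.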

- Business Continuity and Disaster Recovery (BCDR)

- The RTO is 2 to 7 days and the RPO is 24 hours for Tier 3 applications in AWS.

- Ohio (us-east-2) is the DR region.

- A copy of every snapshot created in the primary region is sent to the Ohio region, where it is available for 40 days.

- Failover to the secondary region uses these snapshots to meet the RTO and RPO.

- In addition, a Lambda function creates root volume snapshots and pushes copies to the DR region.

- These root volume snapshots are used to recreate the machines, and the data is restored from the EMC NetWorker backups.

- AWS CloudFormation templates are in place to create part of the infrastructure in the secondary region.

- Cross-Region Replication (CRR) is set up for specific buckets that require a backup in a different region, so their S3 data is preserved.

- Versioning is enabled by default in all S3 buckets to prevent loss of data in the same region.
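Pushing snapshot copies to the Ohio DR region and checking them against the 24-hour RPO can be sketched as follows. The source region is a parameter because the case study does not name the primary region, and passing a customer managed KMS key is optional (without one, the DR region's default EBS encryption key is used). Retention of the DR copies is not handled here.

```python
# Minimal sketch of pushing a snapshot copy to the DR region and checking
# the 24-hour RPO. The source region and KMS key are caller-supplied.
from datetime import datetime, timedelta, timezone

DR_REGION = "us-east-2"  # Ohio
RPO = timedelta(hours=24)  # RPO for Tier 3 applications


def copy_snapshot_to_dr(ec2_dr, source_region, snapshot_id, kms_key_id=None):
    """Copy `snapshot_id` into the DR region. `ec2_dr` must be an EC2 client
    created for DR_REGION, because CopySnapshot runs in the destination region."""
    kwargs = {
        "SourceRegion": source_region,
        "SourceSnapshotId": snapshot_id,
        "Description": f"DR copy of {snapshot_id} from {source_region}",
        "Encrypted": True,
    }
    if kms_key_id:
        kwargs["KmsKeyId"] = kms_key_id
    return ec2_dr.copy_snapshot(**kwargs)["SnapshotId"]


def rpo_met(last_copy_time, now=None):
    """True if the newest DR copy is recent enough to honour the RPO."""
    now = now or datetime.now(timezone.utc)
    return now - last_copy_time <= RPO
```

The DR client would be created with `boto3.client("ec2", region_name=DR_REGION)`. Running `rpo_met` against the start time of the newest DR copy gives a simple daily check that failover could still meet the 24-hour RPO.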

- ARCHIVAL

S3 Intelligent-Tiering is used to archive data automatically based on usage patterns. This is cost-efficient when access patterns are unknown, the objects must remain readily available, and they do not need to be archived for very long durations.

LESSONS LEARNT

- Access logs used to be kept in S3 and consulted only when an issue arose. The Ops team now uses Athena regularly to query the access logs and understand access patterns.

- Reserved Instances provide better savings, so they were used in many places.

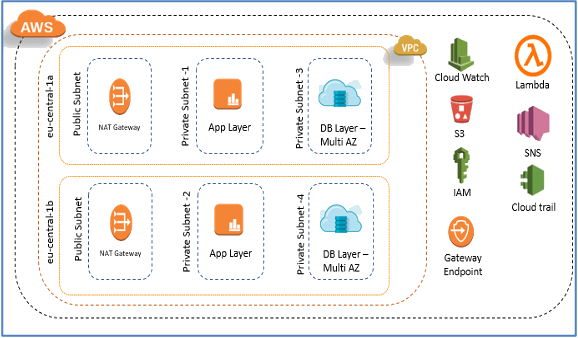

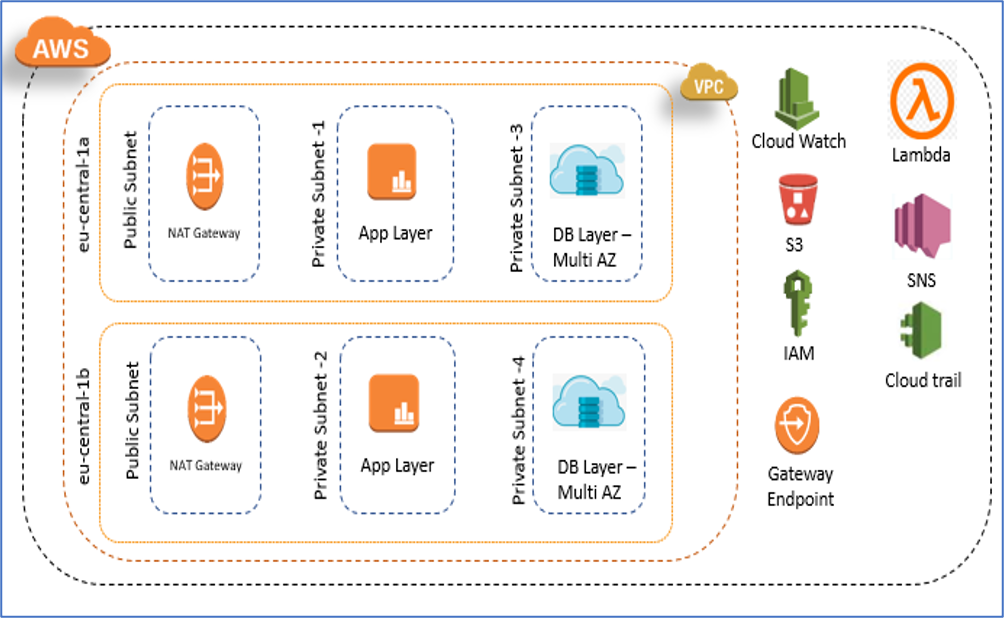

ARCHITECTURAL DIAGRAM
